Blind Spots in the SOC: Four Cognitive Biases That Make Analysts Miss What Matters

Why smart analysts reach wrong conclusions, and how to catch yourself

Your brain is working against you.

Not because you are a bad analyst. Not because you lack training or tools. But because you are human, and human brains take shortcuts. Those shortcuts kept our ancestors alive when a rustling bush might be a predator. In a SOC during an active incident, those same shortcuts make you miss things.

Cognitive biases are not personal flaws. They are features of how the human brain processes information under time pressure, uncertainty, and cognitive load. In cybersecurity, we rarely talk about this. We talk about tools, detections, frameworks, and playbooks. But we almost never talk about the human operating all of it.

This is part one of a series. Today’s focus: the four biases I see hit SOC analysts the hardest, with real-world examples and practical ways to counter each one.

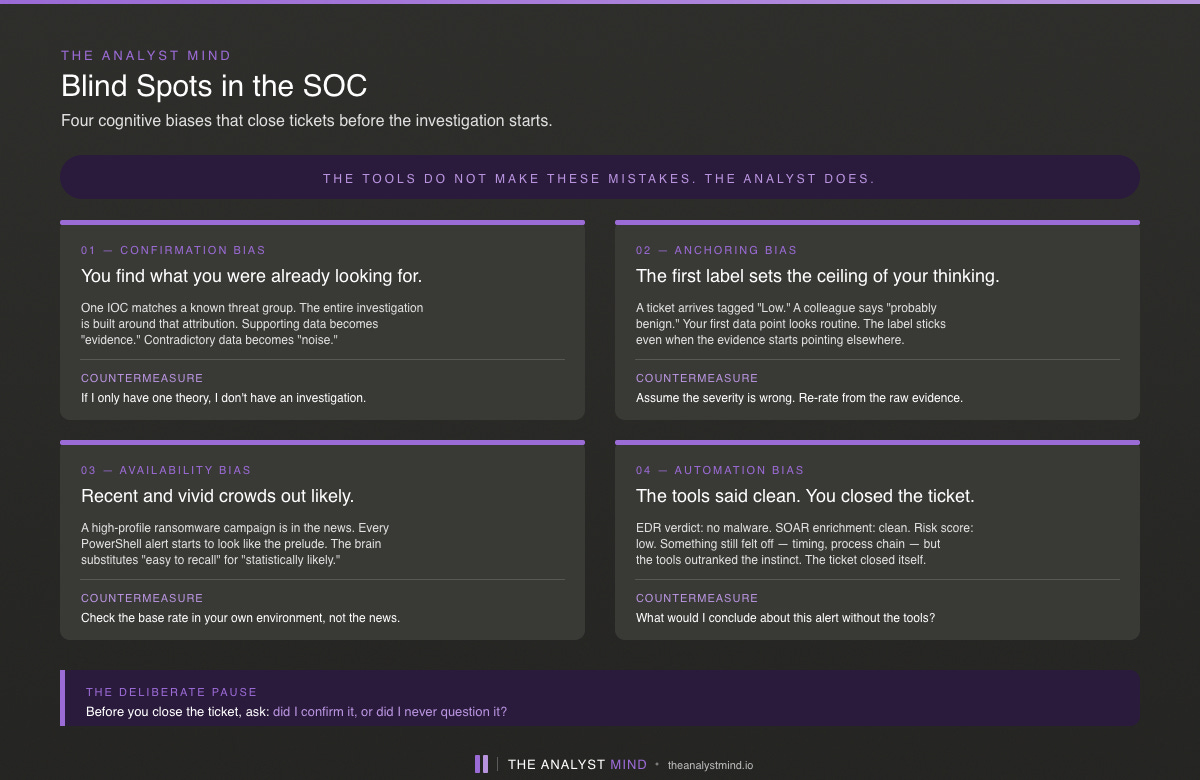

Confirmation Bias: Seeing What You Expect to See

You are investigating a suspicious authentication alert. Early in the triage, you find one indicator matching a known APT group. Your brain locks in: this is APT activity.

From that moment, everything you see gets filtered through that lens. Log entries that support the theory? Evidence. Log entries that contradict it? Noise, probably unrelated. You stop looking for alternative explanations because you already have one that fits.

The problem is that “fits” is not the same as “correct.” While you were building your APT case, you missed the data exfiltration that started three days before the alert fired. You missed it because you were not looking for it. You were looking for confirmation.

This is confirmation bias. The tendency to search for, interpret, and remember information that supports what you already believe, while ignoring or dismissing what contradicts it.

How to counter it: Before closing any case, ask yourself one question: “What evidence would prove me wrong, and have I looked for it?” If you cannot answer that, your investigation is not finished. In my training sessions, I teach the Analysis of Competing Hypotheses method. You must generate at least two competing explanations before settling on one. If you only have one theory, you do not have an investigation. You have a confirmation exercise.
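To make the competing-hypotheses idea concrete, here is a minimal sketch of an ACH-style matrix in Python. The evidence items, hypotheses, and ratings are all invented for illustration, and the scoring is a simplified reading of the method: count how much evidence contradicts each hypothesis, and favor the one with the fewest contradictions rather than the one with the most support.

```python
# Hypothetical ACH matrix. Each piece of evidence is rated against every
# hypothesis as "C" (consistent), "I" (inconsistent), or "N" (neutral).
# All evidence, hypotheses, and ratings here are made up for illustration.
EVIDENCE = {
    "One IOC matches a known APT group": {
        "APT intrusion": "C", "Commodity attacker": "C", "Legitimate admin": "I"},
    "Login came from the user's usual device": {
        "APT intrusion": "I", "Commodity attacker": "I", "Legitimate admin": "C"},
    "Large outbound transfer before the alert": {
        "APT intrusion": "C", "Commodity attacker": "C", "Legitimate admin": "I"},
    "No known APT tooling found on the host": {
        "APT intrusion": "I", "Commodity attacker": "N", "Legitimate admin": "C"},
}


def inconsistency_counts(matrix):
    """Count how many pieces of evidence contradict each hypothesis."""
    counts = {}
    for ratings in matrix.values():
        for hypothesis, rating in ratings.items():
            counts[hypothesis] = counts.get(hypothesis, 0) + (1 if rating == "I" else 0)
    return counts


def rank_hypotheses(matrix):
    """Order hypotheses from least contradicted to most contradicted."""
    counts = inconsistency_counts(matrix)
    return sorted(counts, key=counts.get)


counts = inconsistency_counts(EVIDENCE)
for hypothesis in rank_hypotheses(EVIDENCE):
    print(f"{hypothesis}: contradicted by {counts[hypothesis]} item(s)")
```

The point of the exercise is not the score itself. It is that filling in the "I" cells forces you to go looking for evidence that would prove your favorite theory wrong.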

Anchoring Bias: The First Data Point Wins

The SIEM flags an alert as “Critical.” You open the ticket, and before you have looked at a single log, your brain has already decided this is serious. That severity label has become your anchor.

Now everything you investigate gets measured against that anchor. Lower-severity alerts in the same timeframe? They seem less important by comparison. You deprioritize them. But the real initial access was buried in one of those lower-severity alerts three entries earlier. You missed it because the first thing you saw shaped everything that followed.

Anchoring bias is the tendency to rely too heavily on the first piece of information you encounter. That first data point, whether it is a severity score, an alert title, a colleague’s opinion, or the first IOC you find, disproportionately influences every judgment that follows.

How to counter it: Always ask: “What if my first data point is wrong?” Start from the raw evidence, not from labels or scores. When investigating an alert chain, deliberately examine the full sequence rather than focusing on whichever alert triggered first. One technique I use in training: ban actor names and malware names from ticket titles. The moment you write “APT29 Investigation” at the top of your ticket, every analyst who touches it is anchored before they read a single log line.
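One way to examine the full sequence instead of the loudest alert is to rebuild the chain in time order and leave severity out of the sort entirely. The sketch below assumes a hypothetical alert format (dicts with `id`, `host`, `severity`, and `time` fields) and a one-hour window around the triggering alert; your SIEM export will look different.

```python
from datetime import datetime, timedelta


def alert_chain(alerts, trigger_id, window=timedelta(hours=1)):
    """Return all alerts on the trigger's host within the window, oldest first.

    Severity is deliberately ignored so the label cannot act as an anchor.
    """
    trigger = next(a for a in alerts if a["id"] == trigger_id)
    start, end = trigger["time"] - window, trigger["time"] + window
    related = [
        a for a in alerts
        if a["host"] == trigger["host"] and start <= a["time"] <= end
    ]
    return sorted(related, key=lambda a: a["time"])


# Hypothetical sample data: the "critical" alert is the last event, not the first.
t0 = datetime(2024, 1, 1, 12, 0)
alerts = [
    {"id": "A3", "host": "srv01", "severity": "critical", "time": t0},
    {"id": "A1", "host": "srv01", "severity": "low", "time": t0 - timedelta(minutes=40)},
    {"id": "A2", "host": "srv01", "severity": "medium", "time": t0 - timedelta(minutes=15)},
    {"id": "B1", "host": "ws42", "severity": "high", "time": t0 - timedelta(minutes=5)},
]

chain = alert_chain(alerts, "A3")
print([a["id"] for a in chain])  # ['A1', 'A2', 'A3']
```

Reading the chain from the top puts the low-severity alert in front of you first, which is exactly where the anchor would otherwise have hidden it.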

Availability Bias: Recent Experience Distorts Your Judgment

Your team spent last week responding to a ransomware incident. It was painful, visible, and everyone remembers it. This week, an alert fires showing unusual file encryption activity on a server. Your gut says ransomware. It feels obvious.

But “feels obvious” is not analysis. What you are actually experiencing is availability bias: the tendency to judge the likelihood of something based on how easily examples come to mind, rather than on actual base rates.

Because ransomware is fresh in your memory, your brain overestimates the probability that this new alert is also ransomware. Meanwhile, the actual cause (a recently reconfigured backup process that uses encryption) gets overlooked because you are primed to see ransomware.

The same effect shows up with news coverage, not just personal experience. A high-profile APT campaign hits the news, and suddenly every lateral movement alert in your environment feels like nation-state activity. The base rate for nation-state attacks against your organization has not changed. But your perception of the risk has.

How to counter it: Check base rates. What does the monitoring in your environment actually show? Use historical data, not recent memory. When you catch yourself thinking “this looks just like last week,” pause and ask: “How common is this attack type in our environment, based on data, not based on what I remember?”
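Checking a base rate can be as simple as counting how past alerts of the same type were actually resolved. The sketch below assumes you can export closed cases with a `root_cause` field; the field name and the sample history are hypothetical, not real data from any environment.

```python
from collections import Counter


def base_rates(closed_cases):
    """Return the share of closed cases attributed to each root cause."""
    counts = Counter(case["root_cause"] for case in closed_cases)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {cause: n / total for cause, n in counts.items()}


# Hypothetical history of past "mass file encryption" alerts and how they closed.
history = (
    [{"root_cause": "backup_job"}] * 8
    + [{"root_cause": "ransomware"}] * 1
    + [{"root_cause": "disk_encryption_rollout"}] * 1
)

rates = base_rates(history)
for cause, rate in sorted(rates.items(), key=lambda item: item[1], reverse=True):
    print(f"{cause}: {rate:.0%}")
```

In this invented history, ransomware accounts for one in ten of these alerts. That does not mean the new alert is benign. It means "ransomware" is a hypothesis to test, not a starting assumption.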

Automation Bias: Trusting the Tool Over Your Own Judgment

The EDR scans the endpoint. "No malware detected." The SOAR enrichment comes back clean. The risk score says "Low."

You see all of this. Something about the alert still feels off — maybe the timing, maybe the process chain. But the tools say it is clean. So you close the ticket and move on.

That is automation bias. Not the tool auto-closing the ticket without you seeing it. You closing the ticket because the tool told you it was fine, even though your instinct said otherwise.

The analyst is still in the loop. The analyst still makes the decision. But the decision is shaped by the tool’s verdict rather than by independent analysis. The tool becomes the authority, and the analyst becomes the one who clicks “close.”

This bias is going to get worse as LLMs enter the analyst workflow. An AI-generated investigation summary sounds confident and well-structured even when it is wrong. If you do not have the skills to critically evaluate that output, you have a bigger problem than alert fatigue.

How to counter it: Trust but verify. Build a habit of spot-checking automated verdicts. When a tool gives you a clean bill of health, ask yourself: “What would I decide if the tool did not exist?” If you cannot answer that, you are not augmenting your analysis with automation; you are replacing your analysis with automation.
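A spot-checking habit is easier to keep when the selection is random rather than left to whoever has time. Here is a minimal sketch that pulls a random sample of tickets the tooling marked clean, so an analyst can re-examine them from the raw evidence. The `tool_verdict` field, the ticket format, and the 10% rate are assumptions for illustration.

```python
import random


def pick_spot_checks(tickets, rate=0.1, seed=None):
    """Randomly select tickets with a 'clean' tool verdict for manual review.

    At least one ticket is returned whenever any clean-verdict ticket exists.
    """
    auto_clean = [t for t in tickets if t["tool_verdict"] == "clean"]
    if not auto_clean:
        return []
    sample_size = max(1, round(len(auto_clean) * rate))
    return random.Random(seed).sample(auto_clean, sample_size)


# Hypothetical queue: 20 tickets the tools called clean, 5 they flagged.
tickets = (
    [{"id": f"T{i}", "tool_verdict": "clean"} for i in range(20)]
    + [{"id": f"S{i}", "tool_verdict": "suspicious"} for i in range(5)]
)

to_review = pick_spot_checks(tickets, rate=0.1, seed=7)
print([t["id"] for t in to_review])
```

Passing a `seed` makes the selection reproducible for an audit trail; leave it as `None` in day-to-day use so the sample cannot be predicted.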

What’s Next

These four biases are not the full picture. They are the ones I see most often in SOC environments. Knowing they exist is the first step. But awareness alone does not fix the problem. You need practical tools to fight them in real time.

In the next article, I will cover the W questions that structure your thinking from the first minute of an investigation. After that, we will build the full investigation template that ties it all together.

Remember: Biases are not something you fix once. They are something you manage every day.