Hypotheses

The Theories You Are Actually Testing

You are two hours into an investigation.

You have pulled authentication logs. You have pulled endpoint telemetry. You have checked the user directory, cross-referenced the asset database, queried the firewall. Your notes field is full. Your tabs are full. Your coffee is cold.

And if someone asked you right now - what do you think happened? - you would struggle to answer.

That is the moment most analysts mistake for investigation. It is not. It is browsing.

Evidence Without a Theory Is Just Data

Here is the uncomfortable truth about most SOC work. Analysts believe their job is to find evidence. To pull logs. To correlate indicators. To chase down the unusual thing until it resolves into something nameable.

That is not the job.

The job is to build and kill theories. The evidence only matters in relation to the theories it supports or contradicts. Without a hypothesis, you are not investigating. You are scrolling through data, hoping the answer will surface on its own. It rarely does. And when it does, you cannot explain why.

If you cannot state what you think happened in a single clear sentence, you are not investigating yet. You are preparing to investigate.

The First Hypothesis Is a Trap

So you force yourself to state a theory.

“The account was compromised via stolen credentials from a phishing email earlier this week.”

Now you have a hypothesis. And the moment you have one, something dangerous happens.

Your brain starts working for it, not against it. Every log line that fits the theory becomes “evidence.” Every log line that doesn’t fit becomes “noise.” You start searching for confirmation, and confirmation is cheap. If you only have one theory, every piece of matching data feels like progress. But you are no longer investigating. You are assembling a case for a verdict you have already reached.

This is the pattern the first post in this series called confirmation bias. It hits every analyst, every day. The only reliable countermeasure is structural: refuse to work with a single hypothesis.

If you have one theory, you do not have an investigation. You have a confirmation exercise with extra steps.

The Minimum Is Two

The rule is simple. You need at least two competing hypotheses before you touch the evidence again.

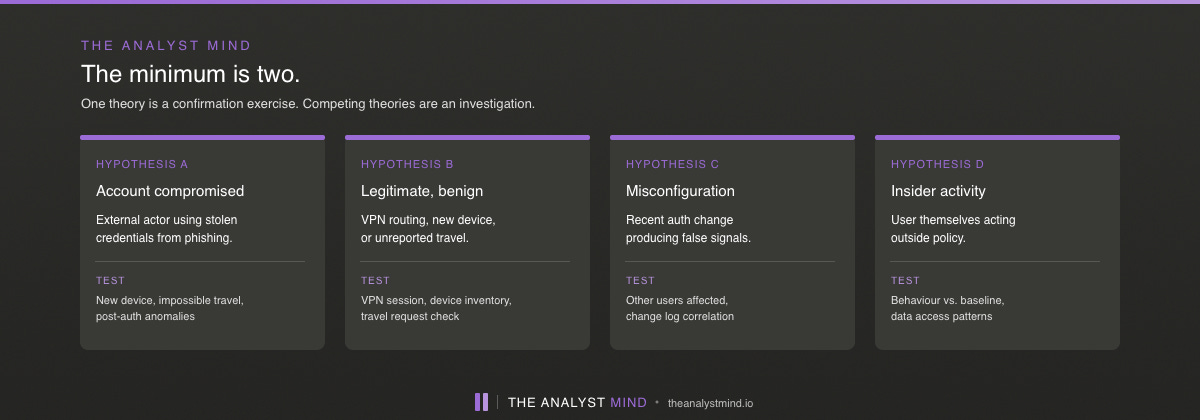

For any suspicious authentication alert, the starting pair might be:

Hypothesis A: The account is compromised. Credentials were stolen and are being used by an external actor.

Hypothesis B: The account is legitimate. The unusual pattern has a benign explanation — VPN routing, a new device, travel the user forgot to mention.

That is not enough. You push for a third.

Hypothesis C: Misconfiguration. A recent change to the authentication system is producing false signals.

And if you are serious, a fourth.

Hypothesis D: Insider activity. The user themselves is doing something they should not, using their own valid credentials.

Each hypothesis changes what evidence is meaningful. Under Hypothesis A, the absence of VPN logs is damning. Under Hypothesis B, the absence of VPN logs is trivial: maybe the user is on their home network. The same data carries different weight depending on which theory you are testing.

This is why Analysis of Competing Hypotheses (ACH), a technique from intelligence tradecraft, works. It forces you to look for the absence of expected evidence, not just the presence of confirming evidence. The strongest hypothesis is the one with the least contradicting evidence, not the one with the most confirming evidence. Confirmation is cheap. Disconfirmation is diagnostic.
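To make the scoring rule concrete, here is a minimal sketch of a competing-hypotheses matrix in Python. The evidence items and their ratings are invented for illustration, and the rating scheme ("C" consistent, "I" inconsistent, "N" neutral) is an assumption for this example, not a prescribed format. The only thing it demonstrates is that hypotheses are ranked by how much evidence contradicts them.

```python
# Hypotheses from the example above:
# A = compromise, B = benign, C = misconfiguration, D = insider.
# Ratings: "C" = consistent, "I" = inconsistent, "N" = neutral.
# Every evidence item and rating below is hypothetical.
MATRIX = {
    "Source IP is a hosting provider, not the corporate VPN":
        {"A": "C", "B": "I", "C": "N", "D": "N"},
    "User confirms by phone that they did not log in":
        {"A": "C", "B": "I", "C": "C", "D": "I"},
    "No other accounts raised this alert after the last auth change":
        {"A": "C", "B": "C", "C": "I", "D": "C"},
}


def inconsistency_counts(matrix):
    """Count, per hypothesis, how many evidence items contradict it."""
    counts = {}
    for ratings in matrix.values():
        for hyp, rating in ratings.items():
            counts[hyp] = counts.get(hyp, 0) + (1 if rating == "I" else 0)
    return counts


def rank(matrix):
    """Order hypotheses from least to most contradicted (ties by name)."""
    counts = inconsistency_counts(matrix)
    return sorted(counts, key=lambda hyp: (counts[hyp], hyp))


counts = inconsistency_counts(MATRIX)
ranking = rank(MATRIX)
print(counts)
print(ranking)
```

Note what the sketch ignores on purpose: the number of "C" ratings. A hypothesis can collect confirming evidence all day and still lose to one that nothing contradicts.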

Where the Augmented Analyst Wins

This is the place in modern SOC work where AI earns its keep, if the analyst knows how to use it.

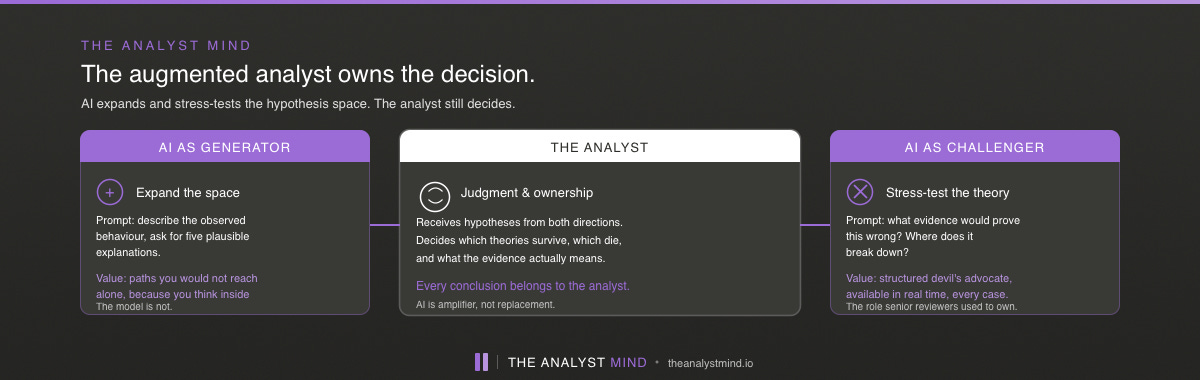

A well-prompted language model can do two things with hypotheses that no enrichment pipeline can do.

1. It can pre-fill the hypothesis space. You describe the observed behaviour and ask for five plausible explanations. The model returns a list. Some are obvious. Some are things you would have reached on your own. And sometimes, not always, but often enough to matter, one or two are paths you would not have considered. Not because you lack experience, but because you are thinking inside the constraints of your current case. The model is not. That breadth is the value.

2. It can challenge the hypothesis you already hold. You hand it your theory and ask: what evidence would prove this wrong? What alternative explanations fit the same facts? Where does this reasoning break down? Used this way, the model is a structured devil’s advocate — the role intelligence analysts have assigned to senior reviewers for decades, now available in real time.

Neither of these is a replacement for analyst judgment. Both are amplifiers.

The augmented analyst is not an analyst who defers to the model. The augmented analyst is one who uses the model to stress-test their own thinking and who still owns every conclusion. The AI expands the hypothesis space. The analyst decides which hypotheses are worth pursuing, which evidence actually supports them, and what the answer means for the business, the environment, and the decision at hand.

There is a trap here, and it is worth naming. If you prompt an LLM with your existing theory and ask it to help you build the case, it will - willingly and convincingly. The model is a compliance machine unless you deliberately instruct it otherwise. It will generate supporting reasoning, surface matching indicators, construct a coherent narrative. Every one of those outputs feels like progress. None of it is investigation. It is confirmation bias with machine-grade production values.

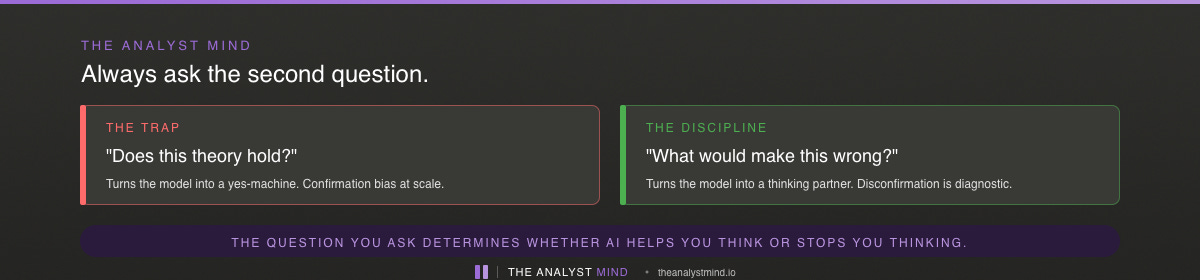

The discipline is to always ask the model the second question. Not just “does this theory hold?” but “what would make this theory wrong?” If you only ask the first, you are not using AI as a thinking partner. You are using it as a yes-machine.
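One way to make the second question a habit is to template it. The sketch below only builds prompt text and does not call any model or API. The function name `challenge_prompts` and the exact wording are assumptions for illustration; the three questions are the ones listed earlier in this post.

```python
def challenge_prompts(hypothesis, observations):
    """Build devil's-advocate prompts for a hypothesis you already hold.

    Returns one prompt per challenge question. Each prompt tells the
    model explicitly not to argue in favour of the hypothesis.
    """
    facts = "\n".join(f"- {item}" for item in observations)
    questions = [
        "What evidence would prove this hypothesis wrong?",
        "What alternative explanations fit the same facts?",
        "Where does this reasoning break down?",
    ]
    return [
        f"Observed facts:\n{facts}\n\n"
        f"My current hypothesis: {hypothesis}\n\n"
        f"{question}\n"
        "Do not argue in favour of the hypothesis."
        for question in questions
    ]


# Hypothetical case details, for illustration only.
prompts = challenge_prompts(
    "The account was compromised via stolen credentials.",
    ["Login from a new device at 14:32", "No VPN session recorded"],
)
for prompt in prompts:
    print(prompt)
    print("---")
```

The answers still need an analyst to weigh them. The template only guarantees that the disconfirming question gets asked.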

Hypotheses Are Living Documents

Treat your hypotheses the way a scientist treats them: as things that must be written down, compared against evidence, and updated as the investigation develops.

Not held in your head.

The moment your hypotheses live only in your head, two things happen. First, you lose track of which ones you have actually considered and which ones felt obvious enough to skip. Second, you quietly revise your theory as new evidence comes in, without noticing you are doing it. This is closely related to what behavioural scientists call hindsight bias, and it is lethal to investigation quality.

Writing them down forces honesty. A hypothesis you wrote at 14:05 that no longer matches the evidence at 15:30 is a hypothesis you killed. That kill is useful. It tells you what you learned. An unwritten hypothesis that silently morphed to fit the new evidence teaches you nothing, because you were never testing it in the first place.

Your investigation notes should answer three questions at any moment:

What hypotheses am I currently considering?

What evidence supports or contradicts each one?

What would I need to see to rule any of them in or out?

If your notes do not answer those three questions, you are not building an investigation. You are building a timeline of your own attention.
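If it helps to see the shape of such notes, here is a minimal sketch as a Python data structure. The field names (`supporting`, `contradicting`, `decisive_tests`) and the example entries are assumptions for illustration. Any format works, including a plain text file, as long as it answers the three questions and records when a hypothesis was written and when it was killed.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Hypothesis:
    statement: str                 # plain language, one sentence
    written_at: str                # when it was written down, e.g. "14:05"
    supporting: List[str] = field(default_factory=list)
    contradicting: List[str] = field(default_factory=list)
    decisive_tests: List[str] = field(default_factory=list)  # rule in / out
    killed_at: Optional[str] = None
    kill_reason: Optional[str] = None

    def kill(self, at, reason):
        """Record that the evidence no longer supports this hypothesis."""
        self.killed_at = at
        self.kill_reason = reason


def active(notes):
    """Question 1: what hypotheses am I currently considering?"""
    return [h for h in notes if h.killed_at is None]


def missing_tests(notes):
    """Question 3 check: active hypotheses with no way to rule them in or out."""
    return [h.statement for h in active(notes) if not h.decisive_tests]


# Hypothetical notes, for illustration only.
notes = [
    Hypothesis("Account compromised via stolen credentials", "14:05",
               decisive_tests=["Mailbox rules created after the login"]),
    Hypothesis("Legitimate login from a new device", "14:05"),
]
untested_before = missing_tests(notes)

notes[1].contradicting.append("User says they have no new device")
notes[1].kill("15:30", "Contradicted by the user's own statement")
print([h.statement for h in active(notes)])
```

The killed hypothesis stays in the list with its timestamp and reason. That record is the part that tells you what you learned.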

Your Hypothesis Depends on Your Assumptions

Before you get too attached to any theory, pause on one question: what am I assuming about the data that this hypothesis depends on?

Your theory might be “the account was compromised at 14:32.” But if the timestamps you are reasoning from are in the wrong timezone, you are testing the wrong hypothesis entirely. A 14:32 UTC event interpreted as local time shifts the entire narrative. Suddenly the “suspicious login after hours” is a routine login during business hours. Your whole case collapses - not because the analysis was flawed, but because the assumption underneath it was.
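A small sketch shows how much rides on that one assumption. It takes a raw timestamp with no timezone attached and reads it two ways: as the user's local time, and as UTC converted to the user's local time. The date, the UTC+9 offset, and the 08:00 to 18:00 business-hours window are all hypothetical values chosen for the example.

```python
from datetime import datetime, time, timedelta, timezone

# Assumed business hours for the example: 08:00 up to (not including) 18:00.
BUSINESS_START = time(8, 0)
BUSINESS_END = time(18, 0)


def in_business_hours(local_dt):
    return BUSINESS_START <= local_dt.time() < BUSINESS_END


def compare_interpretations(raw, user_offset_hours):
    """Read one naive log timestamp as local time and as UTC.

    Returns, for each reading, the user's local clock time and whether
    it falls inside business hours.
    """
    naive = datetime.strptime(raw, "%Y-%m-%d %H:%M")
    user_tz = timezone(timedelta(hours=user_offset_hours))

    as_local = naive.replace(tzinfo=user_tz)
    as_utc = naive.replace(tzinfo=timezone.utc).astimezone(user_tz)

    return {
        "read_as_local": (as_local.strftime("%H:%M"), in_business_hours(as_local)),
        "read_as_utc": (as_utc.strftime("%H:%M"), in_business_hours(as_utc)),
    }


# Hypothetical log line: "2024-03-12 14:32", user located at UTC+9.
result = compare_interpretations("2024-03-12 14:32", user_offset_hours=9)
print(result)
```

Same log line, same user, two opposite conclusions about whether the login happened during working hours. Which one is right depends entirely on what the log source actually records, and that has to be checked, not assumed.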

This is the opening into the next post in this series. Hypotheses live or die by the timeline they are tested against. And timelines are harder to build than most analysts realise. That is where we go next.

The Starting Move

Starting tomorrow, make this the first thing you do after the W+H baseline is in place: write down at least two competing hypotheses in plain language. No jargon. No actor names. Just what you think might have happened, in sentences a peer could challenge.

If you cannot write two, you do not know enough to investigate yet. That is useful information. Go gather more context.

If you write two and one of them feels obviously correct before you examine the evidence, that is the hypothesis you should interrogate hardest. Not because it is wrong, but because “obviously correct” is the sound confirmation bias makes when it is working.

The evidence is not the investigation. The theories are the investigation. The evidence is how you choose between them.
