The 5W+H Questions

Structuring Your Thinking From Minute One

You are sixty seconds into an investigation. The alert fired. The ticket is open. The clock is running.

Most analysts do the same thing: they dive straight into the logs, straight into the tool stack, straight into whatever data is closest. No structure. No sequence. Just motion.

Motion feels productive. It is not the same as progress.

The problem is not speed. The problem is that without a framework for what to ask first, the investigation is shaped by whatever data happens to land in front of you. The first log entry anchors your thinking. The first IOC becomes the theory. And from there, you are not investigating; you are confirming.

If you read the first post in this series, you recognise that pattern. Anchoring bias meets confirmation bias, sixty seconds in, before the analyst even knows it is happening.

There is a countermeasure. It is old. It is simple. It works.

Six Questions, One Framework

Who. What. When. Where. Why. How.

The 5W+H framework has been used in journalism, military intelligence, and law enforcement for decades. It is not clever. It is not novel. That is the point. Its value is that it forces completeness before it allows depth.

When you open an investigation with the 5W+H questions, you are not solving yet. You are mapping. You are building the landscape of the problem before you pick a direction to walk.

Who is affected? Who triggered the alert? Who owns the asset? Who else might be involved?

What happened? What did the detection actually fire on? What is the observable behaviour, stripped of the tool’s interpretation?

When did it start? When was it detected? Is there a gap between those two? What was happening in that gap?

Where in the environment? Which system, which network segment, which cloud tenant? Where does this asset sit in your architecture, and what can it reach?

Why does this matter? Why would an attacker target this? Why now? Or why might this be benign?

How did it happen? How did the activity occur technically? How was access gained, or how was the process initiated?

None of these questions are hard. All of them are missed — routinely — when analysts skip straight to hypothesis.
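One way to make the six questions hard to skip is to turn them into a notes template. The sketch below is a minimal illustration, not a prescribed format: the `PROMPTS` table and the `notes_template` function are hypothetical names, and the prompts are shortened versions of the questions listed above.

```python
# Prompts are shortened from the six questions above (hypothetical layout).
PROMPTS = {
    "Who": ["Who is affected?", "Who triggered the alert?", "Who owns the asset?"],
    "What": ["What happened?", "What did the detection actually fire on?"],
    "When": ["When did it start?", "When was it detected?"],
    "Where": ["Which system, segment, or tenant?", "What can this asset reach?"],
    "Why": ["Why does this matter?", "Why might this be benign?"],
    "How": ["How was access gained, or the process initiated?"],
}


def notes_template() -> str:
    """Render a blank 5W+H notes block with one empty answer slot per prompt."""
    lines = []
    for question, prompts in PROMPTS.items():
        lines.append(question.upper())
        lines.extend(f"  {prompt} ____" for prompt in prompts)
    return "\n".join(lines)


print(notes_template())
```

Every question gets a slot whether or not it feels relevant yet. An empty slot is visible; a question you never wrote down is not.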

The Blank Page Problem

Here is where it gets practical.

One of the biggest friction points in investigation work is the blank page. A ticket opens. The analyst stares at an empty notes field and a pile of raw telemetry. Where do you even start?

This is where automation earns its place, not as a replacement for the analyst, but as a pre-fill for the obvious.

A well-configured SOAR playbook, enrichment pipeline, or even an LLM integration can answer a significant portion of the 5W+H questions before the analyst touches the case. The factual, contextual baseline: Who is this user? What is their role? What asset is this? Where does it sit in the network? When did the alert fire, and what is the detection logic behind it? What does the raw event look like?

That pre-fill is valuable. It eliminates the blank page. It gives the analyst a starting point that is structured, not random. Instead of diving into logs with no direction, the analyst opens a case and sees a partially completed 5W+H matrix, a map with some terrain already sketched in.

This is the kind of work automation was built for. Gathering context. Correlating identifiers. Pulling asset data and user profiles. Fast, repeatable, boring. Exactly the tasks that should not consume analyst time.
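As a rough sketch of what that pre-fill could look like, assume the alert arrives as a dictionary and that user and asset context can be looked up by name. Everything here is hypothetical: the `USERS` and `ASSETS` tables stand in for an identity provider and an asset inventory, and the alert field names are invented for illustration, not taken from any real SOAR product.

```python
# Hypothetical lookup tables standing in for an identity provider and an asset inventory.
USERS = {"jdoe": {"role": "Finance analyst"}}
ASSETS = {"fin-ws-042": {"segment": "finance-vlan", "owner": "Finance IT"}}


def prefill(alert: dict) -> dict:
    """Build a partially completed 5W+H matrix from an alert; unknowns stay None."""
    user = USERS.get(alert.get("user"), {})
    asset = ASSETS.get(alert.get("host"), {})
    return {
        "who": f"{alert['user']} ({user['role']})" if user else alert.get("user"),
        "what": alert.get("raw_event"),  # the raw event, not the alert title
        "when": alert.get("fired_at"),
        "where": f"{alert['host']} in {asset['segment']}" if asset else alert.get("host"),
        "why": None,  # judgment questions are left open for the analyst
        "how": None,
    }


# Hypothetical alert payload for illustration only.
alert = {
    "user": "jdoe",
    "host": "fin-ws-042",
    "fired_at": "2024-05-01T09:14:00Z",
    "raw_event": "Interactive logon from a previously unseen country",
}
matrix = prefill(alert)
for question, answer in matrix.items():
    print(f"{question:>5}: {answer if answer is not None else '(open)'}")
```

Note what the sketch does not attempt. "Why" and "How" stay open, and the "Who" it records is an account name, nothing more.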

But here is the part that matters more than the efficiency gain.

The Answers Are Not Settled

The pre-filled 5W+H is a starting point. It is not the investigation. Every line is a premise to question, not a fact to accept.

The moment you treat the automated answers as settled, you have swapped one blind spot for another: a page so complete it stops you from asking the questions that matter.

Think about what happens. Automation says the “Who” is a specific user. The analyst accepts that and moves on. But what if the account was compromised? Then the “Who” is not the user — it is an attacker using stolen credentials. The initial answer was technically correct (this is the account) and operationally misleading (this is not the person).

The “What” might say “suspicious login from unusual location.” That is the alert summary. But the actual “What” might be the third step in a multi-stage intrusion that started days earlier. The alert is not the event — it is the symptom that finally crossed a detection threshold.

Military intelligence analysts are trained to challenge their own assessments as rigorously as they build them. The 5W+H framework operates the same way. Each question is asked at least twice: once to establish the baseline, and again as the investigation develops to test whether the baseline still holds.

This is where you earn your value. Not in gathering the initial facts; automation handles that. In asking the second-order questions that automation cannot reach.

Who — beyond the account name, who actually performed this action? Is there evidence of shared credentials, compromised tokens, delegated access?

What — beyond the alert description, what is the full sequence of activity? What happened before and after the detection? What did the attacker do that the detection did not catch?

Why — beyond “it looked suspicious,” why would an attacker choose this path? What is the strategic value of this asset, this account, this timing? Or, what benign explanation accounts for all the evidence, not just most of it?

These are judgment questions. They require context that no enrichment pipeline carries: knowledge of the business, understanding of the environment’s normal patterns, awareness of what happened last week and what is planned for next week. The analyst brings this. The tool does not.

A Bias Check Built Into the Structure

There is a second benefit to the 5W+H framework that goes beyond investigation efficiency.

The structure itself is a bias countermeasure.

When you force yourself to answer all six questions, not just the ones that feel relevant, you are resisting the pull of confirmation bias. Your hypothesis might explain the What and the How beautifully. But can it explain the When? Does the timing actually make sense? And the Where: does the affected system fit the theory, or did you quietly ignore that the activity originated from a segment that contradicts your hypothesis?

The 5W+H questions create a completeness check. They make the gaps visible. And gaps — missing answers, questions you cannot explain — are often more diagnostic than the data you have.

The questions you cannot answer tell you more than the ones you can.
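The completeness check can be made mechanical. A minimal sketch, assuming you record for each question whether your hypothesis fits the evidence; the `gaps` function and the example theory are hypothetical.

```python
QUESTIONS = ("who", "what", "when", "where", "why", "how")


def gaps(explained: dict) -> list:
    """Return the questions a hypothesis does not account for.

    `explained` maps a question to True (the hypothesis fits) or False
    (the evidence contradicts it). A missing key means the question was
    never examined. Only True counts as covered.
    """
    return [q for q in QUESTIONS if explained.get(q) is not True]


# Hypothetical theory: "phished credentials used for a remote login".
phishing_theory = {"who": True, "what": True, "how": True, "where": False}
print(gaps(phishing_theory))  # ['when', 'where', 'why']
```

The design choice that matters is treating "never examined" the same as "contradicted". Both are gaps, and both should stop you from closing on the theory.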

This is why the framework matters even for experienced analysts who think they do not need a checklist. Especially for experienced analysts. Experience builds pattern recognition, but it also builds assumptions. The 5W+H framework forces those assumptions into the open where they can be examined.

Combine this with a habit from earlier in this series, the deliberate pause: that moment before closure where you stop confirming and start challenging. Before you close the case, revisit each of the 5W+H questions. Has the answer changed since the investigation started? If your final “Who” is the same as your initial “Who”, are you confident because you verified it, or because you never questioned it?

The Handoff Model

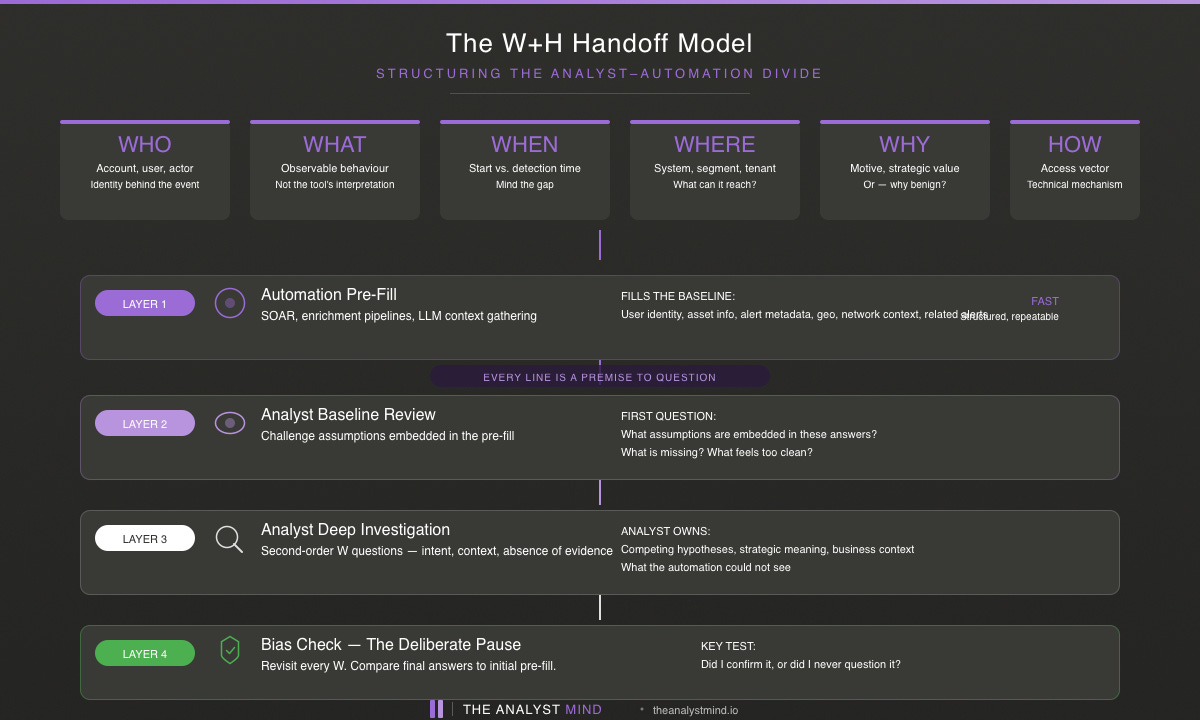

So the practical model looks like this:

Layer 1 (Automation): Pre-fill the 5W+H matrix with factual, contextual data. User identity, asset information, alert metadata, geolocation, network context, related recent alerts. Fast. Structured. Consistent across every case.

Layer 2 (Analyst baseline review): Read the pre-fill. Your first question is not “does this look right?” Your first question is: what assumptions are embedded in these answers? What does the automation not know? What is missing? Flag anything that feels incomplete and flag anything that feels too clean.

Layer 3 (Analyst deep investigation): Pursue the second-order 5W+H questions. Test whether the initial answers survive scrutiny. Build competing explanations. Look for what the automation could not see: intent, context, strategic meaning, absence of expected evidence.

Layer 4 (Bias check): Before closing, walk the full 5W+H one final time. Compare your final answers to the initial pre-fill. Where they differ, you learned something. Where they match, verify that you actually confirmed them, rather than simply never challenged them.

This is not a workflow diagram for a SOAR platform. It is a thinking model. The automation handles the context gathering so the analyst can focus on the cognition. That is the division of labour that actually works.
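The Layer 4 comparison is the one step that lends itself to a small sketch. Assume each question carries the pre-filled answer, the analyst's final answer, and a flag the analyst sets only after actively testing it. The `Answer` class, the `closure_review` function, and the case values are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Answer:
    initial: str            # what the pre-fill said
    final: str              # what the analyst concluded
    verified: bool = False  # True only if the analyst actively tested it


def closure_review(matrix: dict) -> dict:
    """Sort each question into changed, confirmed, or unchallenged."""
    review = {"changed": [], "confirmed": [], "unchallenged": []}
    for question, answer in matrix.items():
        if answer.final != answer.initial:
            review["changed"].append(question)
        elif answer.verified:
            review["confirmed"].append(question)
        else:
            review["unchallenged"].append(question)
    return review


# Hypothetical case values for illustration.
case = {
    "who": Answer("jdoe", "jdoe"),
    "what": Answer("Unusual login", "Third stage of a multi-day intrusion", verified=True),
    "where": Answer("fin-ws-042", "fin-ws-042", verified=True),
}
print(closure_review(case))
# {'changed': ['what'], 'confirmed': ['where'], 'unchallenged': ['who']}
```

The "unchallenged" bucket is the one to read before closing. An answer that matches the pre-fill and was never tested is an assumption, not a finding.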

What Comes Next

The 5W+H framework gives you a structure for the first minutes. But an investigation is more than its opening. In the next post, we will tie everything together — the bias countermeasures, the reasoning chain, the 5W+H questions — into a full investigation template. A single, practical document that carries you from alert to closure with structured thinking at every stage.

The tools will change. The thinking compounds.